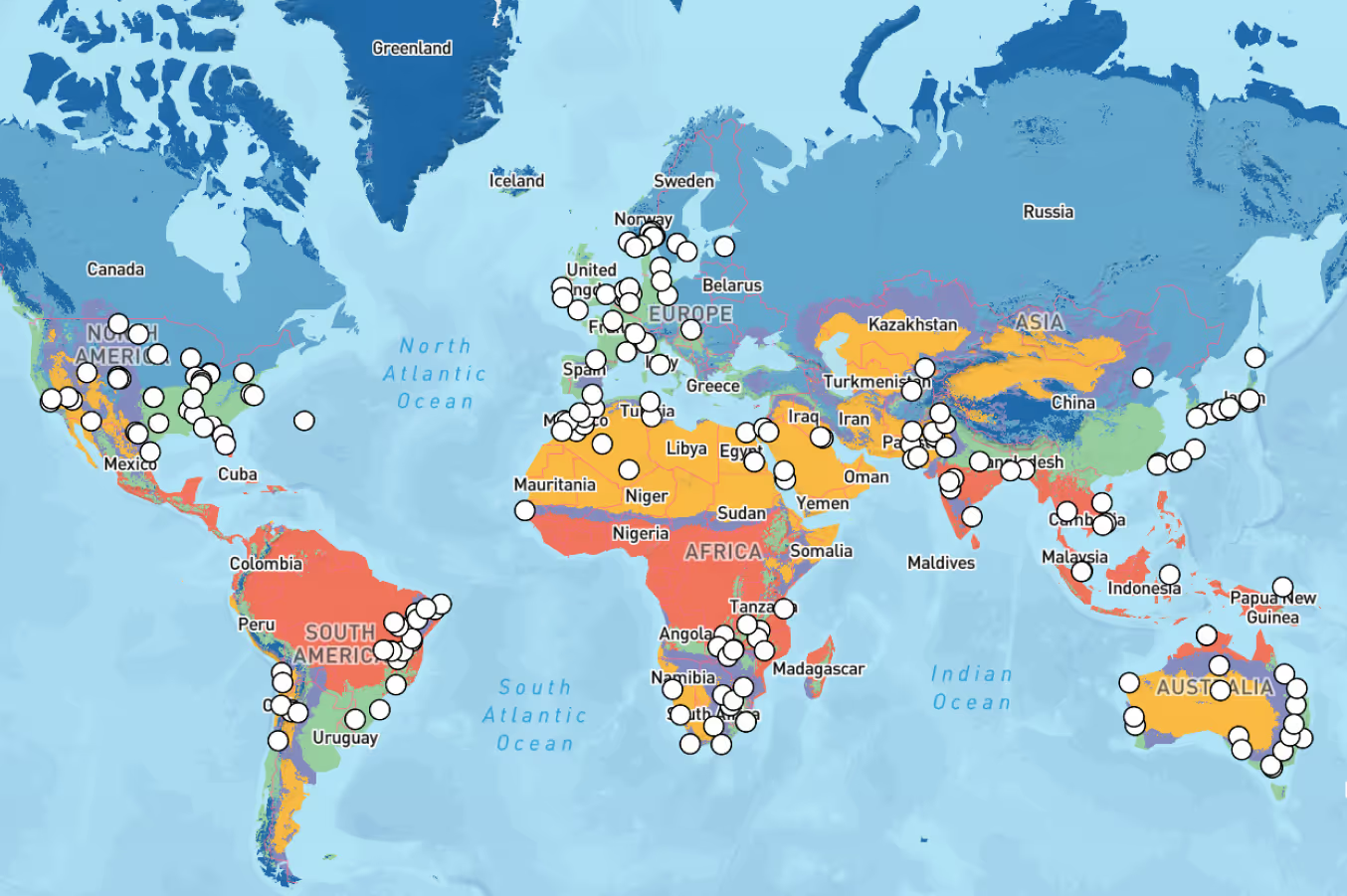

Our team works with engineers and other solar professionals all over the world every day, so we know the importance of an accurate, detailed irradiance dataset and of understanding its uncertainty. Our data scientists are always looking for additional data sources to improve our models, and therefore reduce our error and uncertainty.

So that raises the question: why does Solcast only provide its Historical Time Series data back to 2007?

There are two major reasons for this: (1) the quality and specifications of satellite data from earlier periods are worse, and (2) climate change is causing trends in regional and local weather patterns, which makes older data less valid, especially for forward-looking project planning.

We’ll now dig into these two reasons a bit further.

Why does satellite data matter so much for solar resource estimation?

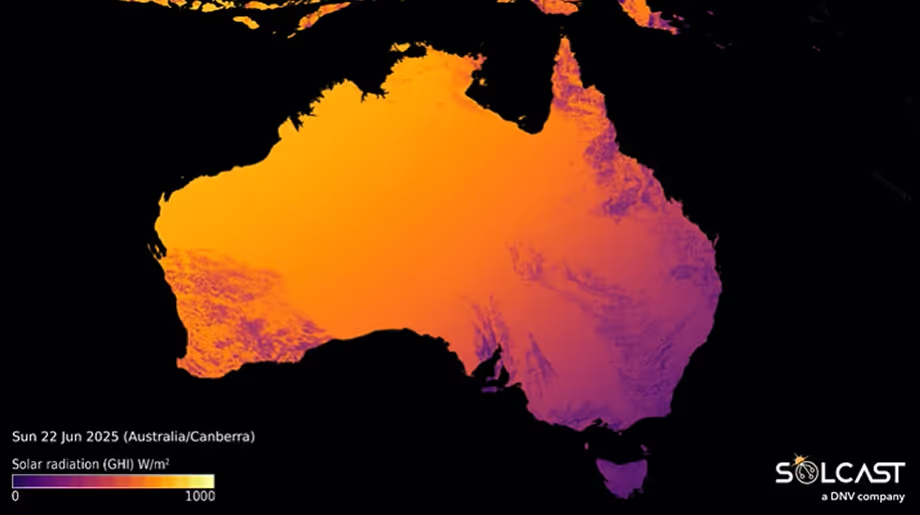

Satellite imagery with high spatial, temporal and spectral resolution is critical for accurate, granular cloud tracking, which in turn is essential for accurate solar irradiance estimation. That’s because, for most regions of the world, clouds are the largest driver of the solar resource.

How satellite platforms have changed over time

Space-based sensing platforms, such as the geostationary weather satellites used by Solcast, can take more than a decade to plan, fund, develop, launch and test. So the satellite data you’re using today is based on technology that’s about 15 years old, and satellite data from the year 2000 is based on technology from the 1980s.

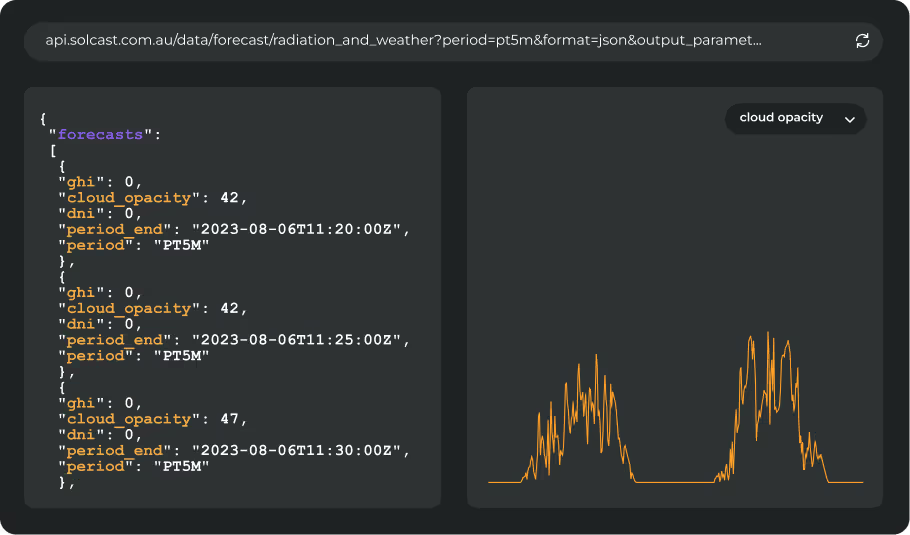

The latest (third) generation of geostationary weather satellites, launched globally between 2014 and 2022, has delivered about a 2x boost in spatial resolution, 3-6x boost in temporal resolution, and 3x improvement in spectral resolution. When you combine these improvements, it’s about a 20-30x improvement!

This comparison shows the difference between old satellite capabilities (left) and the current Himawari satellites (right).
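As a rough check on how the per-dimension gains quoted above add up, here is a back-of-the-envelope sketch. It assumes (our assumption, not a stated Solcast method) that the three gains are independent and simply multiply, and that "2x spatial" refers to linear resolution rather than pixel count.

```python
# Back-of-the-envelope sketch. Assumption (ours): the three gains are
# independent and simply multiply; "2x spatial" is read as linear resolution.
SPATIAL_GAIN = 2.0             # ~2x spatial resolution (quoted above)
TEMPORAL_GAIN = (3.0, 6.0)     # 3-6x temporal resolution (quoted above)
SPECTRAL_GAIN = 3.0            # ~3x spectral resolution (quoted above)


def combined_gain(spatial: float, temporal: float, spectral: float) -> float:
    """Overall gain if the per-dimension improvements multiply independently."""
    return spatial * temporal * spectral


low = combined_gain(SPATIAL_GAIN, TEMPORAL_GAIN[0], SPECTRAL_GAIN)
high = combined_gain(SPATIAL_GAIN, TEMPORAL_GAIN[1], SPECTRAL_GAIN)
print(f"combined gain: {low:.0f}x to {high:.0f}x")
```

This gives 18x to 36x, the same order as the 20-30x figure above. Counting pixels instead (2x linear resolution means 4x the pixels) would double both ends, so the exact multiplier depends on how each dimension is weighted.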

Within the previous (second) generation of satellites, there were also significant changes, and the later part of the second generation had significantly improved capabilities. For North and South America, you can read about some of the differences in the GOES I-M satellites (launched 1994-2001) compared to the GOES N-P (launched 2006-2010). In other regions of the world, the improvements during this period were even more marked – e.g. in Asia and Australia where prior to 2007 imagery was only available once per hour! Clouds can change a lot during an hour.
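To put "clouds can change a lot during an hour" into numbers, here is a rough sketch of how far a cloud drifts between consecutive images. The 10 m/s cloud speed is an assumed, illustrative value; the ~2 km pixel is the satellite scale mentioned later in this article, and 10 minutes is the full-disk scan interval of the current Himawari satellites.

```python
# Illustrative only: CLOUD_SPEED_M_PER_S is an assumed value, not from the article.
CLOUD_SPEED_M_PER_S = 10.0   # assumed cloud advection speed
PIXEL_SIZE_KM = 2.0          # ~2 km satellite pixel


def drift_km(speed_m_per_s: float, interval_min: float) -> float:
    """Distance (km) a cloud moves between two images taken interval_min apart."""
    return speed_m_per_s * interval_min * 60.0 / 1000.0


for label, interval in [("hourly imagery", 60), ("10-minute imagery", 10)]:
    d = drift_km(CLOUD_SPEED_M_PER_S, interval)
    print(f"{label}: {d:.0f} km drift, about {d / PIXEL_SIZE_KM:.0f} pixels")
```

At these assumed values, hourly imagery lets a cloud move about 36 km (roughly 18 pixels) between frames, versus about 6 km (3 pixels) with 10-minute imagery, which makes matching the same cloud across frames far less ambiguous. Clouds also grow, dissipate and change shape in that time, which a simple drift calculation does not capture.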

In addition to these differences in resolution, successive satellites also bring more qualitative improvements. For example, geostationary satellites orbit about 36,000 km (35,786 km) above the equator, so even a small wobble in the camera has an enormous impact on the image. Accurate cloud tracking requires models to compare successive images and map how the clouds move between them. That becomes much more difficult when the image itself shifts from frame to frame. Wobble was significantly worse on older satellite platforms, so adding that older data to a new model is unlikely to improve it.

The 'bump' of the cloud from frame to frame in this GIF shows the impact of a small wobble. Larger wobbles make it impossible to build a predictable model of cloud movement.
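Two quick calculations make the wobble problem concrete. The first uses the small-angle approximation (ground shift ≈ distance × pointing error in radians) at the point directly beneath the satellite; away from that point the slant range, and therefore the shift, is larger. The second is a toy one-dimensional block-matching tracker, a deliberately simplified stand-in for real cloud-motion algorithms rather than Solcast's method. The cloud profile, motion and wobble values are hypothetical.

```python
GEO_ALTITUDE_KM = 35_786.0  # geostationary orbit height above the equator


def ground_shift_km(pointing_error_urad: float, range_km: float = GEO_ALTITUDE_KM) -> float:
    """Small-angle approximation: ground shift = range x pointing error (radians)."""
    return range_km * pointing_error_urad * 1e-6


def shifted(signal: list[float], k: int) -> list[float]:
    """Move a 1D brightness profile k pixels to the right, padding with clear sky (0.0)."""
    n = len(signal)
    return [signal[i - k] if 0 <= i - k < n else 0.0 for i in range(n)]


def estimate_motion(prev: list[float], curr: list[float], max_shift: int) -> int:
    """Brute-force block matching: return the shift s (pixels) that minimises the
    mean squared difference between curr[i] and prev[i - s]."""
    n = len(prev)
    best_s, best_err = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        pairs = [(curr[i], prev[i - s]) for i in range(n) if 0 <= i - s < n]
        err = sum((c - p) ** 2 for c, p in pairs) / len(pairs)
        if err < best_err:
            best_s, best_err = s, err
    return best_s


# Hypothetical toy scene: a single cloud in an otherwise clear 25-pixel strip.
CLOUD = [0.0] * 10 + [1.0, 3.0, 5.0, 3.0, 1.0] + [0.0] * 10
TRUE_MOTION_PX = 2   # real cloud movement between frames (hypothetical)
WOBBLE_PX = 1        # extra whole-image shift from platform wobble (hypothetical)

# The tracker only sees the combined shift, so wobble is read as cloud motion.
next_frame = shifted(CLOUD, TRUE_MOTION_PX + WOBBLE_PX)
measured = estimate_motion(CLOUD, next_frame, max_shift=5)
print(f"{ground_shift_km(10):.2f} km ground shift per 10 microradians")
print(f"true motion {TRUE_MOTION_PX} px, measured {measured} px")
```

A pointing error of just 10 microradians (about 0.0006°) shifts the image by roughly 0.36 km, and about 56 microradians moves it by a full 2 km pixel. In the toy tracker, a one-pixel wobble is indistinguishable from cloud motion: it reports 3 pixels of movement when the cloud moved 2.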

The climate is changing, fast!

Climate change has significantly altered global weather patterns in recent years. As the climate changes, historical weather data lose value, because they are less likely to reflect conditions that would occur in the current climate. Data from earlier periods may not accurately represent current and future weather patterns, which degrades the quality of solar resource assessment.

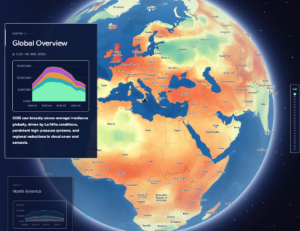

It’s well established in the scientific literature that there are real decadal-scale trends in clouds and irradiance, resulting from circulation changes related to aerosols and greenhouse-gas warming.

This paper, from researchers at the UK’s National Centre for Atmospheric Science, shows significant trends in North America, and also across southern and eastern Europe. These trends are regional: if you zoom in to the ~2 km scale of satellite data, you will see even stronger trends, due to local interactions between the regional aerosol and circulation changes and local coastlines, topography, land surface and vegetation.
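A simple way to see why a trend erodes the value of older data: if annual irradiance follows a steady trend, a long-term mean lags behind current conditions, and the lag grows with the length of the record. The series below is invented (a +20 kWh/m² per decade brightening with no weather noise) purely to illustrate the arithmetic; real trends are neither linear nor uniform across regions.

```python
# Invented example: annual GHI on a steady upward trend, weather noise ignored.
CURRENT_YEAR = 2023
CURRENT_LEVEL = 1800.0   # kWh/m2 per year on the trend line in CURRENT_YEAR (assumed)
TREND_PER_YEAR = 2.0     # +20 kWh/m2 per decade (assumed, not from the article)


def trend_ghi(year: int) -> float:
    """Annual GHI on the assumed linear trend."""
    return CURRENT_LEVEL + TREND_PER_YEAR * (year - CURRENT_YEAR)


def record_mean(first_year: int, last_year: int = CURRENT_YEAR) -> float:
    """Long-term mean GHI over an inclusive range of years."""
    years = range(first_year, last_year + 1)
    return sum(trend_ghi(y) for y in years) / len(years)


for first in (1994, 2007):  # 1994 is a hypothetical earlier start year
    m = record_mean(first)
    bias = m - CURRENT_LEVEL
    print(f"{first}-{CURRENT_YEAR}: mean {m:.0f} kWh/m2, bias {bias:+.0f} ({bias / CURRENT_LEVEL:+.1%})")
```

Under these assumptions, a record starting in 1994 gives a mean 29 kWh/m² (about 1.6%) below the current level, versus 16 kWh/m² (about 0.9%) for a record starting in 2007.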

Chinese and Dutch researchers have found large trends in solar irradiance over China in recent decades due to changes in air pollution. These changes are partly captured in the aerosol data we use at Solcast, but it’s important to note that aerosols do not act in isolation: if you change the nature and concentration of aerosols, you change temperatures, clouds and rainfall too!

So why do people use older data for solar resource assessment?

We’re aware of two primary reasons for this.

Firstly, there is inertia in industry practices. A lot has changed since the first providers started scaling up solar resource assessment datasets during the mid-to-late 2000s. At that time, they had to use older satellite data, because that’s all there was. Awareness of climate change was also far lower then, especially of its effects on specific aspects of the climate such as the solar resource. For these legacy providers, it would be a backwards step in terms of their “features” to stop offering older data.

Secondly, there is a desire to estimate interannual variability more accurately. Interannual variability is the year-to-year change in the solar resource, caused by factors like El Niño or the North Atlantic Oscillation, and by seasonal-scale variability in regional weather patterns. When you estimate interannual variability to work out how bad a very poor year might be for solar production, the statistical model you use benefits from more input years. However, that model may not be valid when there’s a significant underlying trend driven by climate change.
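The sketch below illustrates that last point with an invented 30-year series: a +2 kWh/m² per year trend plus a repeating pattern of weather anomalies. It compares a naive P90 (all years treated as one stationary climate, with normally distributed anomalies assumed) against a trend-aware version that detrends with ordinary least squares and centres the P90 on the fitted level for the latest year. Both the data and the trend-aware recipe are hypothetical illustrations, not Solcast’s methodology, and extrapolating a fitted trend carries its own uncertainty.

```python
from statistics import NormalDist, mean, stdev

# Invented data: linear trend plus a repeating, zero-sum anomaly pattern (kWh/m2).
YEARS = list(range(1994, 2024))
PATTERN = [30.0, -20.0, 10.0, -40.0, 25.0, -5.0]
ghi = [1800.0 + 2.0 * (y - 2023) + PATTERN[i % len(PATTERN)] for i, y in enumerate(YEARS)]

# One-sided 90% exceedance factor (~1.28), assuming normally distributed anomalies.
Z90 = NormalDist().inv_cdf(0.90)


def ols(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit y = a + b*x; returns (a, b)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b


# Naive approach: every year is a sample from one stationary climate.
naive_std = stdev(ghi)
p90_naive = mean(ghi) - Z90 * naive_std

# Trend-aware approach: measure variability in the detrended residuals and
# centre the P90 on the fitted level for the most recent year.
a, b = ols(YEARS, ghi)
residuals = [g - (a + b * y) for y, g in zip(YEARS, ghi)]
resid_std = (sum(r * r for r in residuals) / (len(residuals) - 2)) ** 0.5
p90_trend = (a + b * YEARS[-1]) - Z90 * resid_std

print(f"naive:       sd {naive_std:.1f}, P90 {p90_naive:.0f} kWh/m2")
print(f"trend-aware: sd {resid_std:.1f}, P90 {p90_trend:.0f} kWh/m2")
```

In this example the trend both inflates the naive standard deviation and drags the mean down, so the naive P90 lands well below the trend-aware one: the extra early years add a spurious signal rather than information about genuine year-to-year variability.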