This blog post was originally published on 22 December 2023.

This is Part III of a three-part series on evaluating irradiance and PV power accuracy. See also Evaluating accuracy I: Satellite-derived irradiance data and Evaluating accuracy II: Forecast irradiance data.

The main application of irradiance data is in PV power modelling, whether for planning and design or for operational use. When assessing the accuracy of a given model, it’s important to consider that both the input data (i.e. irradiance parameters) and the power model are sources of bias and error. If you directly compare a power model to power measurements, the result reflects the combined error of the irradiance data and the power model. Understanding how to minimise these accuracy gaps will help you make the best decision when comparing multiple data vendors and power models.

Which kind of model?

When you’re looking at different PV power models, it’s important to start with an understanding of the different approaches to modelling how assets generate power from irradiance.

Physical Models: PV power generation is shaped by several physical processes. Robust power models therefore represent these effects explicitly, including losses from snow soiling, thermal behaviour and inverter clipping, and apply them to a base model that converts irradiance into electrical output.

Physical modelling can be implemented with varying degrees of complexity. At one end, models may describe electrical behaviour in detail, from individual modules and strings through to inverters. At the other, simpler physical models can deliver reliable baseline results using a limited set of site parameters, such as capacity, orientation, tilt and efficiency. The appropriate level of detail depends on the application and the quality of available site information.
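
As a rough illustration of the simpler end of that range, the sketch below converts plane-of-array irradiance into AC power using a linear irradiance scaling, a temperature correction and an inverter limit. It is not Solcast's model. The function name, the default temperature coefficient of -0.4% per °C and the 10% lumped loss factor are illustrative assumptions, and it assumes that tilt and orientation have already been applied upstream to produce plane-of-array irradiance.

```python
def simple_pv_power_kw(poa_wm2, cell_temp_c, dc_capacity_kw, ac_limit_kw,
                       temp_coeff_per_c=-0.004, system_losses=0.10):
    """Estimate AC power (kW) from plane-of-array irradiance and cell temperature.

    All defaults are illustrative assumptions, not vendor parameters.
    """
    if poa_wm2 <= 0:
        return 0.0
    # DC output scales linearly with irradiance relative to 1000 W/m2.
    dc_kw = dc_capacity_kw * (poa_wm2 / 1000.0)
    # Thermal behaviour: output falls as cells heat above 25 degrees C.
    dc_kw *= 1.0 + temp_coeff_per_c * (cell_temp_c - 25.0)
    # Soiling, wiring and similar losses lumped into a single fraction.
    dc_kw *= 1.0 - system_losses
    # Inverter clipping: AC output cannot exceed the inverter limit.
    return max(0.0, min(dc_kw, ac_limit_kw))


# A 100 kW DC array behind an 80 kW inverter on a cool, clear day.
print(simple_pv_power_kw(950.0, 30.0, dc_capacity_kw=100.0, ac_limit_kw=80.0))
```

Even a model this small shows why site metadata matters: if the capacity or inverter limit is wrong, the error shows up directly in the output regardless of how good the irradiance input is.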

For use cases that require tighter alignment with measured site behaviour, Premium PV Power builds on this physical foundation. It combines physics‑based models with site‑specific learning from cleaned historical generation data, using ensemble techniques and hybrid approaches to improve forecast robustness and deliver probabilistic outputs.

Machine Learning Models: AI and ML models can be good at modelling complicated systems where the external factors aren’t well understood, but they are often poor at modelling factors with outsized impacts. Factors such as eclipses, snow soiling, dust soiling and inverter clipping are often misrepresented by machine learning models when they aren’t treated explicitly. In theory, empirical or machine learning models should be capable of detecting and predicting events like snow soiling or inverter clipping. In practice, this requires a long training period, which often isn’t feasible, and it requires the model to have access to the appropriate input data and features.

For operational forecasting, Solcast’s approach to PV power modelling is based on physics. By using explicit plant and system specifications, such as layout, orientation, tracking configuration and inverter limits, physical models provide a transparent and repeatable baseline forecast without relying on long histories of measured power output.

This approach is suited to operational use. PV measurement records are often short or incomplete, and frequently affected by non‑meteorological factors such as curtailment, outages, commissioning effects and data‑quality issues. Physical modelling avoids fitting these artefacts, while allowing performance to be assessed consistently across sites and portfolios.

For resource assessment modelling, we recommend DNV’s SolarFarmer model, a more complex and explicit 3D physical modelling tool.

The next step is to start reviewing the available information from the vendors you’re assessing.

Reviewing the Vendor

Review the vendors’ documentation and published accuracy information

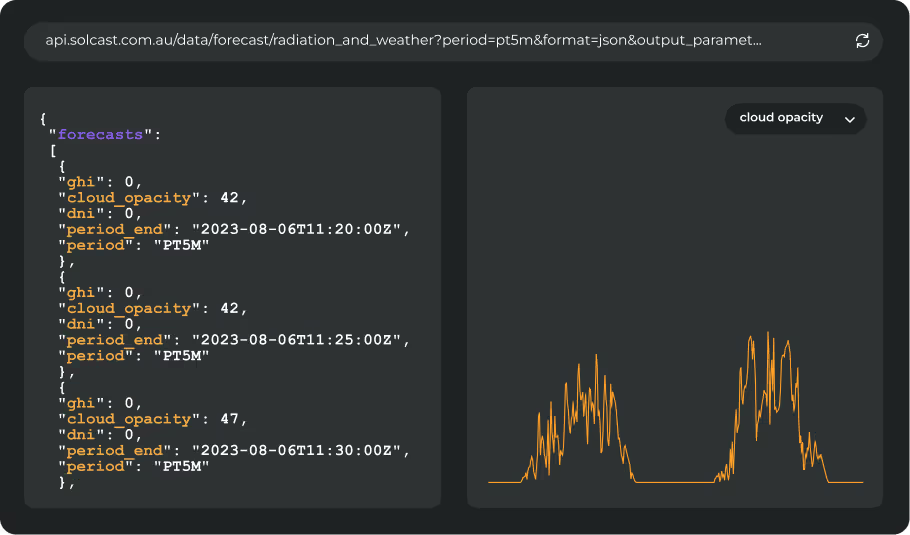

Data vendors should make information about their models and accuracy validation available and easy to find. This documentation should include a description of what kind of model you are looking at, and the site metadata that is required. You don’t want to select a model and then realise that you don’t have the site detail required as inputs into the model.

To make sure you end up with an accurate model, check that the model information explains:

- Input parameters for site metadata

- Outputs for irradiance as well as power

- How the model explicitly treats soiling, inverter clipping and other losses

Look for information about how the models are built, what level of academic rigour has been applied and where they have been validated. Solcast publishes information about our Rooftop PV model and Advanced PV Power model on our website. On those pages you can see that our models are based on models built and published through university research projects, including our work through the Australian National University in a $2.6m ARENA industry research project.

Review customer feedback, references and commercial applications

Running your own trial is time intensive, especially for forecast data. In our experience working with hundreds of organisations on trials, a forecast trial takes 1-2 data scientists or engineers 4-6 weeks of work to produce a full report, in addition to the duration of the forecast trial itself. You can cut down on much of this effort by reviewing existing commercial applications of the vendor’s data. Look for other organisations that share your use case, and ask the vendor how the data and models are being used in each case.

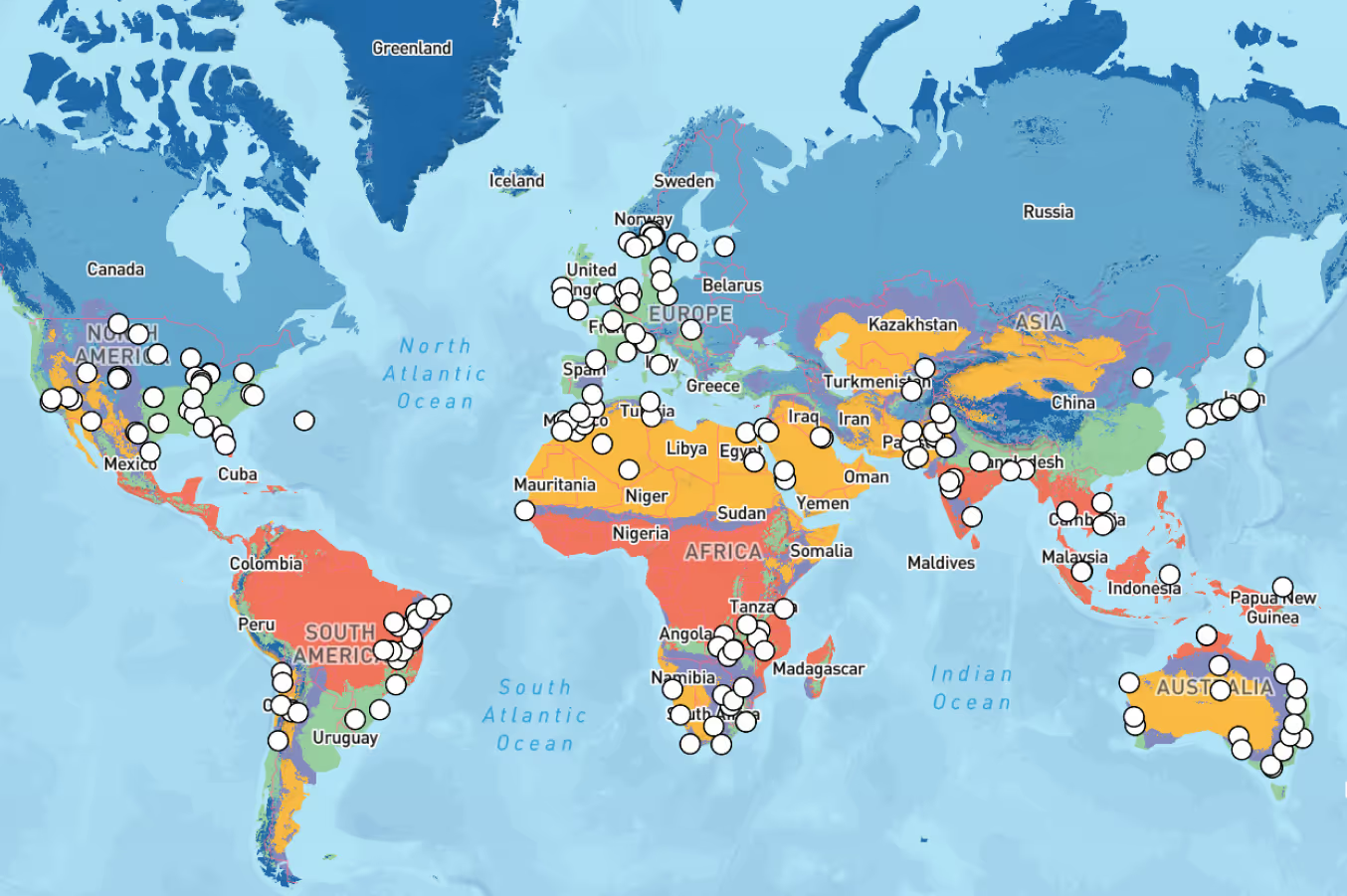

Solcast’s data and PV power model are in operational use globally by a range of grid operators, utilities and load forecasters in Australia, Taiwan, Korea, the US, the UK and Germany. Each deployment of the model has included local calibration and validation in concert with users. Our team are happy to provide references and details of how our model is used in each case, without sharing commercial-in-confidence insights from our existing customers.

Once you’ve reviewed these materials from each vendor, you might choose to proceed to running your own assessment. Assessments can be lengthy, so in operational use cases many organisations choose to operate a trial rather than a full comparative accuracy assessment. If you proceed to an assessment, we’ve outlined some steps to follow to ensure your analysis is as smooth and easy as possible.

Running your own assessment

See our previous articles on evaluating accuracy if you think this is right for you. As noted in those articles, there are some key considerations when performing your own accuracy validation. These are summarised below:

- Refresh yourself on the vendor’s methodology. Depending on the vendors you plan to assess, their methodology may affect how you need to perform the assessment.

- Understand the scope of the assessment. An accuracy assessment is a significant undertaking, so it is important to consider a number of different factors to ensure that you get the insights you are looking for.

- Note that the scope increases significantly with the additional complexity of a forecast trial.

- Define assessment parameters. Once you have established the scope required, it is important to establish the criteria under which you will assess potential vendors.

Dealing with PV Power

Unlike irradiance, PV power modelling depends on site‑specific configuration. The physical characteristics provided to the model must accurately reflect the plant as built. If they do not, the resulting output will not be representative of actual performance, undermining any assessment of accuracy.

Purely statistical approaches typically depend on long, uninterrupted histories of uncurtailed measurements. Physical models, by contrast, rely primarily on site configuration to produce a sensible baseline result. In Premium PV Power, this physical baseline is enhanced using site‑specific learning from historical generation data, allowing systematic residual behaviour to be addressed while preserving a strong physical grounding.

Any misalignment between the real plant and the configuration supplied to the model will distort both deterministic forecasts and probabilistic outputs.

Setting Basic Site Configuration:

In some cases, site parameters may be uncertain. Information may be missing, unavailable, or no longer reflect the installed plant. Comparing modelled output with historical production data can help determine whether the assumed configuration is reasonable under normal operating conditions.

Because non‑meteorological effects often obscure this comparison, periods affected by curtailment, outages or other operational constraints should be excluded, and irradiance measurements used where available. In Premium PV Power, cleaned historical generation data is used alongside physical modelling to capture site‑specific behaviour more reliably. This hybrid approach supports probabilistic forecasting and operational decision‑making, including dispatch and bidding.

Tuning Specific Site Configurations:

In operational forecasting, it is often necessary to reflect constraints that are unrelated to weather. Solcast therefore allows users to apply overrides at query time to represent deviations from normal operation, such as reduced inverter availability, outages or curtailment. This enables forecasts to reflect known operational conditions without altering the underlying site configuration.

Assessing Accuracy

Once you have accounted for the additional complexities that come with assessing PV power accuracy, it is important not to forget the other key steps in ensuring an accurate assessment, as discussed in the earlier articles in this series on assessing historical irradiance data and assessing forecast data.

Aligning Timeseries:

This ensures that each data point represents the same period, by matching timezones and temporal resolution.
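
A minimal sketch of this step, using only the Python standard library, might look like the following. It assumes measurements are logged in local time with a fixed UTC offset, that modelled data is already in UTC, and that periods are labelled by their start. All three are assumptions to check against your logger and your vendor's conventions. The helper names are hypothetical, and in practice a dataframe library would usually do this work.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def to_utc(naive_local, utc_offset_hours):
    """Attach a fixed UTC offset to a naive local timestamp and convert to UTC."""
    local_tz = timezone(timedelta(hours=utc_offset_hours))
    return naive_local.replace(tzinfo=local_tz).astimezone(timezone.utc)


def resample_mean(series, period_minutes):
    """Average (timestamp, value) pairs into period-beginning buckets.

    Only valid when period_minutes divides 60 evenly (e.g. 5, 15, 30).
    """
    buckets = defaultdict(list)
    for ts, value in series:
        floored = ts.minute - ts.minute % period_minutes
        start = ts.replace(minute=floored, second=0, microsecond=0)
        buckets[start].append(value)
    return {start: sum(v) / len(v) for start, v in sorted(buckets.items())}


def align(measured, modelled):
    """Keep only the periods that are present in both series."""
    common = sorted(measured.keys() & modelled.keys())
    return [(t, measured[t], modelled[t]) for t in common]


# 10-minute measurements logged at UTC+10, compared with 30-minute UTC model data.
raw = [
    (datetime(2023, 12, 22, 10, 0), 40.0),
    (datetime(2023, 12, 22, 10, 10), 50.0),
    (datetime(2023, 12, 22, 10, 20), 60.0),
]
measured = resample_mean([(to_utc(t, 10), v) for t, v in raw], 30)
modelled = {datetime(2023, 12, 22, 0, 0, tzinfo=timezone.utc): 48.0}
print(align(measured, modelled))
```

A fixed offset ignores daylight saving transitions, so sites that log in a daylight-saving local time would need a full timezone database instead.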

Applying Quality Control:

Remove periods of invalid measurements that would corrupt your assessment. This is most commonly needed for measurement data with missing or corrupted data points.
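
One way to sketch this filtering is shown below. The thresholds are illustrative assumptions rather than a standard: measurements are dropped if they are missing, negative, or more than 5% above site capacity, and any known curtailment or outage windows are excluded as well. The function and parameter names are hypothetical.

```python
import math


def quality_control(rows, capacity_kw, excluded_periods=(), tolerance=1.05):
    """Drop (timestamp, measured, modelled) rows with unusable measurements.

    excluded_periods is a sequence of (start, end) windows, end exclusive,
    covering known curtailment, outages or other non-weather effects.
    """
    clean = []
    for ts, measured, modelled in rows:
        # Missing or corrupted readings.
        if measured is None or math.isnan(measured):
            continue
        # Physically implausible readings (assumed thresholds).
        if measured < 0 or measured > capacity_kw * tolerance:
            continue
        # Periods flagged as curtailed or otherwise constrained.
        if any(start <= ts < end for start, end in excluded_periods):
            continue
        clean.append((ts, measured, modelled))
    return clean


rows = [(0, 50.0, 48.0), (1, float("nan"), 40.0), (2, 130.0, 90.0), (3, 20.0, 60.0)]
print(quality_control(rows, capacity_kw=100.0, excluded_periods=[(3, 4)]))
```

Timestamps can be any comparable type; integers are used here only to keep the example short. Sites that report small negative night-time values may prefer to clamp those to zero rather than drop them.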

Evaluating Against Assessment Criteria:

Return to the assessment criteria you defined earlier and perform a qualitative and/or quantitative assessment to confirm that each vendor meets your requirements.
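
For the quantitative side, a small sketch of three commonly used error metrics is given below: mean bias error, mean absolute error and root mean square error. Normalising by site capacity is one convention among several (some assessments normalise by mean measured power instead), so treat that choice and the function name as assumptions to adapt to your own criteria.

```python
import math


def accuracy_metrics(measured, modelled, capacity_kw):
    """Return bias, MAE and RMSE as a percentage of site capacity."""
    if len(measured) != len(modelled) or not measured:
        raise ValueError("series must be non-empty and of equal length")
    # Error is modelled minus measured, so positive bias means over-prediction.
    errors = [mod - obs for obs, mod in zip(measured, modelled)]
    n = len(errors)
    mbe = sum(errors) / n
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    scale = 100.0 / capacity_kw
    return {
        "nMBE_pct": mbe * scale,
        "nMAE_pct": mae * scale,
        "nRMSE_pct": rmse * scale,
    }


print(accuracy_metrics([50.0, 60.0, 70.0], [52.0, 58.0, 73.0], capacity_kw=100.0))
```

Reporting bias alongside MAE or RMSE helps separate a systematic offset, which often points to a site configuration problem, from random error.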

Missed the earlier parts? Start with Evaluating accuracy I: Satellite-derived irradiance data and Evaluating accuracy II: Forecast irradiance data.